Optical character recognition (OCR)

by Chris Woodford. Last updated: February 22, 2023.

Do you ever struggle to read a friend's handwriting? Count yourself lucky, then, that you're not working for the US Postal Service, which has to decode and deliver something like 30 million handwritten envelopes every single day!

With so much of our lives computerized, it's vitally important that machines and humans can understand one another and pass information back and forth. Mostly computers have things their way—we have to "talk" to them through relatively crude devices such as keyboards and mice so they can figure out what we want them to do. But when it comes to processing more human kinds of information, like an old-fashioned printed book or a letter scribbled with a fountain pen, computers have to work much harder.

That's where optical character recognition (OCR) comes in. It's a type of software (program) that can automatically analyze printed text and turn it into a form that a computer can process more easily. OCR is at the heart of everything from handwriting analysis programs on cellphones to the gigantic mail-sorting machines that ensure all those millions of letters reach their destinations. How exactly does it work? Let's take a closer look!

Photo: Recognizing characters #1: Can you make out the blue letter "P," beginning the word "Petrus," in this illuminated, handwritten bible dating from 1407 CE? Imagine what a computerized optical character recognition program would make of it! Photo courtesy of Wikimedia Commons.



What is OCR?

When it comes to optical character recognition, our brains and eyes are far superior to any computer.

As you read these words on your computer screen, your eyes and brain are carrying out optical character recognition without you even noticing! Your eyes are recognizing the patterns of light and dark that make up the characters (letters, numbers, and things like punctuation marks) printed on the screen and your brain is using those to figure out what I'm trying to say (sometimes by reading individual characters but mostly by scanning entire words and whole groups of words at once).

Computers can do this too, but it's really hard work for them. The first problem is that a computer has no eyes. If you want it to read something like the page of an old book, you have to present it with an image of that page, made with an optical scanner or a digital camera. The page you create this way is a graphic file (often a JPG). As far as a computer's concerned, there's no difference between it and a photograph of the Taj Mahal or any other graphic: it's a completely meaningless pattern of pixels (the colored dots or squares that make up any computer graphic image). In other words, the computer has a picture of the page rather than the text itself, so it can't simply read the words the way we can. OCR is the process of turning a picture of text into the text itself: producing something like a TXT or DOC file from a scanned JPG of a printed or handwritten page.

Photo: Recognizing characters: To you and me, it's the word "an", but to a computer this is just a meaningless pattern of black and white. And notice how the fibers in the paper are introducing some confusion into the image. If the ink were slightly more faded, the gray and white pattern of fibers would start to interfere and make the letters even harder to recognize.
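Before any letters can be recognized, a program usually has to decide which pixels are ink and which are paper. One common way to do that is thresholding with Otsu's method. It is not described in this article, so treat it as an illustrative choice. Otsu's method picks the gray level that best splits the image into a dark group and a light group. This minimal Python sketch assumes an 8-bit grayscale image stored as a list of rows, and the pixel values are invented.

```python
def otsu_threshold(pixels):
    """Pick the gray level that best separates dark "ink" from light "paper".

    pixels: a flat list of 8-bit gray values (0 = black, 255 = white).
    Otsu's method tries every possible threshold and keeps the one that
    maximizes the between-class variance of the two resulting groups.
    """
    histogram = [0] * 256
    for p in pixels:
        histogram[p] += 1
    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(histogram))

    best_t, best_between = 0, -1.0
    weight_dark = sum_dark = 0
    for t in range(256):
        weight_dark += histogram[t]          # pixels with value <= t
        if weight_dark == 0:
            continue
        weight_light = total - weight_dark   # pixels with value > t
        if weight_light == 0:
            break
        sum_dark += t * histogram[t]
        mean_dark = sum_dark / weight_dark
        mean_light = (sum_all - sum_dark) / weight_light
        between = weight_dark * weight_light * (mean_dark - mean_light) ** 2
        if between > best_between:
            best_t, best_between = t, between
    return best_t


def binarize(image, threshold):
    """Turn a grid of gray values into 1 (ink) and 0 (paper)."""
    return [[1 if p <= threshold else 0 for p in row] for row in image]


# A tiny made-up scan: ink is around 30-40, paper and fibers 190-225.
scan = [
    [220, 200, 30, 215],
    [210, 35, 40, 190],
    [225, 30, 205, 220],
]
t = otsu_threshold([p for row in scan for p in row])
print(t)                  # 40
print(binarize(scan, t))  # [[0, 0, 1, 0], [0, 1, 1, 0], [0, 1, 0, 0]]
```

If the ink faded toward the gray of the paper fibers, the two groups would overlap. No single threshold could then separate them cleanly, which is exactly the problem the caption describes.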

What's the advantage of OCR?

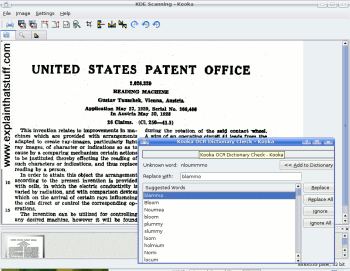

Once a printed page is in this machine-readable text form, you can do all kinds of things you couldn't do before. You can search through it by keyword (handy if there's a huge amount of it), edit it with a word processor, incorporate it into a Web page, compress it into a ZIP file and store it in much less space, send it by email—and all kinds of other neat things. Machine-readable text can also be decoded by screen readers, tools that use speech synthesizers (computerized voices, like the one Stephen Hawking used) to read out the words on a screen so blind and visually impaired people can understand them. (Back in the 1970s, one of the first major uses of OCR was in a photocopier-like device called the Kurzweil Reading Machine, which could read printed books out loud to blind people.)

Photo: Scanning in your pocket: smartphone OCR apps are fast, accurate, and convenient. Left: Here I'm scanning the text of the article you're reading now, straight off my computer screen, with my smartphone and Text Scanner (an Android app by Peace). Right: A few seconds later, a very accurate version of the scanned text appears on my phone screen.

How does OCR work?

Let's suppose life were really simple and there was only one letter in the alphabet: A. Even then, you can probably see that OCR would be quite a tricky problem, because every single person writes the letter A in a slightly different way. Printed text has the same issue: books and other documents are printed in many different typefaces (fonts), so the letter A can appear in many subtly different forms.

Photo: There's a fair bit of variation between these different versions of a capital letter A, printed in different computer fonts, but there's also a basic similarity: you can see that almost all of them are made from two angled lines that meet in the middle at the top, with a horizontal line between.

Broadly speaking, there are two different ways to solve this problem, either by recognizing characters in their entirety (pattern recognition) or by detecting the individual lines and strokes characters are made from (feature detection) and identifying them that way. Let's look at these in turn.

Pattern recognition

If everyone wrote the letter A exactly the same way, getting a computer to recognize it would be easy. You'd just compare your scanned image with a stored version of the letter A and, if the two matched, that would be that. Kind of like Cinderella: "If the slipper fits..."
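In code, this "does the slipper fit?" approach is usually called template matching. Here's a minimal Python sketch. It assumes each character has already been cut out and scaled to a tiny 5-by-5 black-and-white grid. The templates, the pixel-agreement score, and the 0.9 cutoff are all illustrative assumptions, not taken from any real OCR product.

```python
def to_bitmap(rows):
    """Convert strings like "..#.." into rows of 1 (ink) and 0 (paper)."""
    return [[1 if c == "#" else 0 for c in row] for row in rows]


# Hypothetical stored templates: one 5x5 bitmap per character.
TEMPLATES = {
    "A": to_bitmap(["..#..", ".#.#.", "#####", "#...#", "#...#"]),
    "L": to_bitmap(["#....", "#....", "#....", "#....", "#####"]),
    "T": to_bitmap(["#####", "..#..", "..#..", "..#..", "..#.."]),
}


def match_score(image, template):
    """Fraction of pixels on which the image and the template agree."""
    pairs = [(a, b) for img_row, tpl_row in zip(image, template)
             for a, b in zip(img_row, tpl_row)]
    return sum(a == b for a, b in pairs) / len(pairs)


def recognize(image, templates=TEMPLATES, min_score=0.9):
    """Return (best character, score), or (None, score) if nothing fits well enough."""
    best = max(templates, key=lambda ch: match_score(image, templates[ch]))
    score = match_score(image, templates[best])
    return (best, score) if score >= min_score else (None, score)


# A scanned "A" with one pixel missing from the right end of its crossbar.
scanned = to_bitmap(["..#..", ".#.#.", "####.", "#...#", "#...#"])
print(recognize(scanned))   # ('A', 0.96)
```

The missing pixel costs only a little, so the scan still matches the stored A far better than anything else. A character in an unfamiliar font would agree with every template much less well. That is why pure pattern matching struggles outside the fonts it has stored.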

So how do you get everyone to write the same way? Back in the 1960s, a special font called OCR-A was developed for use on things like bank checks. Every character was exactly the same width (making it what's called a monospace font), and the strokes were carefully designed so each character could easily be distinguished from all the others. Check printers were designed to use that font, and OCR equipment was designed to recognize it. By standardizing on one simple font, OCR became a relatively easy problem to solve. The only trouble is that most of what the world prints isn't set in OCR-A, and no one uses that font for their handwriting! So the next step was to teach OCR programs to recognize letters in a number of very common fonts (ones like Times, Helvetica, Courier, and so on). That meant they could recognize quite a lot of printed text, but there was still no guarantee they could recognize any font you might send their way.

Photo: OCR-A font: Designed to be read by computers as well as people. You might not recognize the style of text, but the numbers probably do look familiar to you from checks and computer printouts. Note that similar-looking characters (like the lowercase "l" in Explain and the number "1" at the bottom) have been designed so computers can easily tell them apart.

Feature detection

Also known as feature extraction or intelligent character recognition (ICR), this is a much more sophisticated way of spotting characters. Suppose you're an OCR computer program presented with lots of different letters written in lots of different fonts; how do you pick out all the letter As if they all look slightly different? You could use a rule like this: If you see two angled lines that meet in a point at the top, in the center, and there's a horizontal line between them about halfway down, that's a letter A. Apply that rule and you'll recognize most capital letter As, no matter what font they're written in. Instead of recognizing the complete pattern of an A, you're detecting the individual component features (angled lines, crossed lines, or whatever) from which the character is made. Most modern omnifont OCR programs (ones that can recognize printed text in any font) work by feature detection rather than pattern recognition. Some use neural networks (computer programs that automatically extract patterns in a brain-like way).

Photo: Feature detection: You can be pretty confident you're looking at a capital letter A if you can identify these three component parts joined together in the correct way.
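The rule for spotting an A can be written down almost word for word. This Python sketch assumes an earlier stage (not shown) has already reduced the character to straight line segments, scaled to its bounding box. The segment format, the angle cutoffs, and the tolerances are hypothetical choices for illustration.

```python
import math

# Hypothetical input: a character already reduced to straight line segments
# (x1, y1, x2, y2), with coordinates scaled to the character's bounding box
# so that x runs 0..1 left to right and y runs 0..1 top to bottom.


def classify(seg):
    """Label a segment as 'horizontal', 'vertical', or 'angled'."""
    x1, y1, x2, y2 = seg
    angle = math.degrees(math.atan2(abs(y2 - y1), abs(x2 - x1)))
    if angle < 20:
        return "horizontal"
    if angle > 80:
        return "vertical"
    return "angled"


def top_end(seg):
    x1, y1, x2, y2 = seg
    return (x1, y1) if y1 <= y2 else (x2, y2)


def bottom_end(seg):
    x1, y1, x2, y2 = seg
    return (x2, y2) if y1 <= y2 else (x1, y1)


def looks_like_A(segments, tol=0.15):
    """Two angled lines meeting at the top center, plus a bar about halfway down."""
    angled = [s for s in segments if classify(s) == "angled"]
    bars = [s for s in segments if classify(s) == "horizontal"]
    if len(angled) != 2 or len(bars) != 1:
        return False
    top1, top2 = top_end(angled[0]), top_end(angled[1])
    meet_at_top_center = (math.dist(top1, top2) < tol
                          and abs((top1[0] + top2[0]) / 2 - 0.5) < tol
                          and max(top1[1], top2[1]) < tol)
    feet = sorted(bottom_end(s)[0] for s in angled)
    legs_spread = feet[0] < 0.5 < feet[1]
    bar_y = (bars[0][1] + bars[0][3]) / 2
    bar_halfway = abs(bar_y - 0.5) < 0.2
    return meet_at_top_center and legs_spread and bar_halfway


wide_a = [(0.5, 0.0, 0.1, 1.0), (0.5, 0.0, 0.9, 1.0), (0.3, 0.55, 0.7, 0.55)]
narrow_a = [(0.5, 0.05, 0.25, 1.0), (0.52, 0.04, 0.75, 1.0), (0.35, 0.6, 0.65, 0.62)]
letter_h = [(0.1, 0.0, 0.1, 1.0), (0.9, 0.0, 0.9, 1.0), (0.1, 0.5, 0.9, 0.5)]
print(looks_like_A(wide_a), looks_like_A(narrow_a), looks_like_A(letter_h))  # True True False
```

Both As pass even though their proportions differ. The H fails because its uprights are vertical rather than angled. Real omnifont programs need far more robust features than this toy rule, so treat it only as an illustration of the idea.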


How does handwriting recognition work?

Recognizing the characters that make up neatly laser-printed computer text is relatively easy compared to decoding someone's scribbled handwriting. That's the kind of simple-but-tricky, everyday problem where human brains beat clever computers hands-down: we can all make a rough stab at guessing the message hidden in even the worst human writing. How? We use a combination of automatic pattern recognition, feature extraction, and—absolutely crucially—knowledge about the writer and the meaning of what's being written ("This letter, from my friend Harriet, is about a classical concert we went to together, so the word she's written here is more likely to be 'trombone' than 'tramline'.")
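One simple way a computer can mimic the "trombone, not tramline" reasoning works in three steps. It multiplies how well each candidate word matches the ink by how plausible that word is in context, then picks the highest combined score. This is a Bayes-style combination, named here as an illustrative technique rather than as how any particular product works. All the numbers in this Python sketch are made up.

```python
def best_reading(visual_scores, context_prior, floor=1e-6):
    """Combine how well each word fits the ink with how likely it is in context.

    visual_scores: word -> how well the shapes match (from a hypothetical recognizer)
    context_prior: word -> how plausible the word is given what we already know
    Returns the winning word and the normalized combined scores.
    """
    combined = {w: s * context_prior.get(w, floor) for w, s in visual_scores.items()}
    total = sum(combined.values())
    normalized = {w: c / total for w, c in combined.items()}
    return max(normalized, key=normalized.get), normalized


# Made-up numbers: the scribble looks slightly more like "tramline"...
visual = {"tramline": 0.6, "trombone": 0.4}
# ...but in a letter about a classical concert, "trombone" is far more plausible.
concert_context = {"trombone": 0.05, "tramline": 0.001}

print(best_reading(visual, {})[0])               # tramline (no context: shapes decide)
print(best_reading(visual, concert_context)[0])  # trombone
```

Without any context, the shapes decide and the program reads "tramline." Once it knows the letter is about a concert, "trombone" wins.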

Cursive handwriting (with letters joined up and flowing together) is very much harder for a computer to recognize than computer-printed type, because it's difficult to know where one letter ends and another begins. Many people write so hastily that they don't bother to form their letters fully, making recognition by pattern or feature extremely hard. Another problem is that handwriting is an expression of individuality, so people may go out of their way to make their writing different from the norm. When it comes to reading words like this, we rely heavily on the meaning of what's written, our knowledge of the writer, and the words that we've already read—something computers can't manage so easily.
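Here is a quick way to see why joined-up writing causes trouble. One naive method for splitting a line of printed text into characters is to cut wherever a column of pixels contains no ink at all (a vertical projection profile). This Python sketch uses tiny invented bitmaps. It shows the method working on separated letters and failing once a connecting stroke joins them. It illustrates the segmentation problem and does not describe how any particular handwriting recognizer works.

```python
def to_bitmap(rows):
    """Convert strings like "#.#" into rows of 1 (ink) and 0 (paper)."""
    return [[1 if c == "#" else 0 for c in row] for row in rows]


def split_characters(bitmap):
    """Split a binary image of one line of text into (start, end) column ranges.

    Counts the ink in each column (a vertical projection profile) and cuts
    wherever a column is completely empty.
    """
    width = len(bitmap[0])
    ink_per_column = [sum(row[x] for row in bitmap) for x in range(width)]
    pieces, start = [], None
    for x, ink in enumerate(ink_per_column):
        if ink and start is None:
            start = x                    # a new character begins here
        elif not ink and start is not None:
            pieces.append((start, x))    # the character ended at column x - 1
            start = None
    if start is not None:
        pieces.append((start, width))    # a character runs to the right edge
    return pieces


# "HI" printed with a gap between the letters...
printed = to_bitmap(["#.#..###",
                     "###...#.",
                     "#.#..###"])
# ...and the same letters joined by a connecting stroke, as in cursive.
joined = to_bitmap(["#.#..###",
                    "###...#.",
                    "#.######"])

print(split_characters(printed))  # [(0, 3), (5, 8)]
print(split_characters(joined))   # [(0, 8)]  one blob: where does the H end?
```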

Photo: The tricky problem of handwriting recognition.

Making it easy

Where computers do have to recognize handwriting, the problem is often simplified for them. For example, mail-sorting computers generally only have to recognize the ZIP code (postcode) on an envelope, not the entire address. So they just have to identify a relatively small amount of text made only from basic letters and numbers. People are encouraged to write the codes legibly (leaving spaces between characters, using all uppercase letters) and, sometimes, envelopes are preprinted with little squares for you to write the characters in to help you keep them separate.
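Restricting what a box is allowed to contain makes the recognizer's job much easier. As a hypothetical illustration, suppose a character recognizer returns a confidence score for several possible characters in each box. For a US ZIP code (five digits), anything that isn't a digit can simply be thrown out before choosing. The scores and the function in this Python sketch are invented.

```python
DIGITS = set("0123456789")


def read_zip(box_scores, length=5):
    """Read a ZIP code from per-box recognizer scores, allowing digits only.

    box_scores: one dict per character box, mapping candidate characters to
    confidence values (output of a hypothetical character recognizer).
    Returns the code as a string, or None if it can't be a valid ZIP code.
    """
    if len(box_scores) != length:
        return None
    chars = []
    for scores in box_scores:
        digit_scores = {c: s for c, s in scores.items() if c in DIGITS}
        if not digit_scores:
            return None                  # nothing in this box could be a digit
        chars.append(max(digit_scores, key=digit_scores.get))
    return "".join(chars)


boxes = [
    {"l": 0.7, "1": 0.3},   # looks more like a lowercase L, but must be a digit
    {"O": 0.6, "0": 0.4},   # letter O or zero? Only zero is allowed
    {"0": 0.9},
    {"1": 0.8, "7": 0.2},
    {"8": 0.5, "B": 0.5},
]
print(read_zip(boxes))  # 10018
```

Ambiguous shapes like "l" versus "1" or "O" versus "0" resolve themselves, because only the digits are in the running.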

Forms designed to be processed by OCR sometimes have separate boxes for people to write each letter in or faint guidelines known as comb fields, which encourage people to keep letters separate and write legibly. (Generally the comb fields are printed in a special color, such as pink, called a dropout color, which can be easily separated from the text people actually write, usually in black or blue ink.)

Artwork: Forms designed for OCR incorporate simple aids to reduce scanning errors, including comb fields (top) and character boxes (middle) printed in a dropout color (pink), and bubble choice fields or tick boxes (bottom).
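Why does a dropout color help? Assuming the form is scanned in color, one simple approach is to look only at the red channel. Pink and red comb fields are nearly as bright as white paper in that channel, while black and blue ink stay dark. This Python sketch compares that approach with an ordinary brightness threshold. The RGB values are invented, and the red-channel filter is chosen for illustration rather than taken from any particular scanner.

```python
WHITE = (255, 255, 255)
PINK = (255, 182, 193)        # a light pink dropout color
DEEP_PINK = (220, 80, 100)    # a darker pink comb-field line
BLACK_INK = (0, 0, 0)
BLUE_INK = (20, 40, 160)


def drop_out_red(image, threshold=128):
    """Keep only pixels that are dark in the red channel.

    Pink and red are bright in the red channel, like white paper, so they
    vanish; black and blue ink are dark in red, so they survive.
    """
    return [[1 if r < threshold else 0 for (r, _g, _b) in row] for row in image]


def plain_gray(image, threshold=128):
    """For comparison: threshold ordinary brightness (luminance)."""
    return [[1 if 0.299 * r + 0.587 * g + 0.114 * b < threshold else 0
             for (r, g, b) in row] for row in image]


form_row = [[WHITE, PINK, DEEP_PINK, BLACK_INK, BLUE_INK]]
print(drop_out_red(form_row))  # [[0, 0, 0, 1, 1]]
print(plain_gray(form_row))    # [[0, 0, 1, 1, 1]]
```

The plain brightness threshold mistakes the darker pink line for ink. The red-channel version drops both pinks and keeps the handwriting.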

Tablet computers and cellphones that have handwriting recognition often use feature extraction to recognize letters as you write them. If you're writing a letter A, for example, the touchscreen can sense you writing first one angled line, then the other, and then the horizontal line joining them. In other words, the computer gets a head start in recognizing the features because you're forming them separately, one after another. That makes feature extraction much easier than having to pick out the features from handwriting scribbled on paper.
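Because a touchscreen delivers strokes one at a time, recognition can work on the strokes directly instead of hunting for them in an image. This Python sketch assumes each stroke arrives as a list of (x, y) touch points. It labels each stroke by its direction and compares the sequence with a small, hypothetical table of stroke "recipes." Real handwriting recognizers are far more tolerant of different stroke orders and shapes.

```python
import math

# Hypothetical input: each stroke is the list of (x, y) touch points recorded
# between finger-down and finger-up, in screen coordinates (y grows downward).


def stroke_shape(points):
    """Describe a roughly straight stroke as '-', '|', '/', or '\\'."""
    (x0, y0), (x1, y1) = points[0], points[-1]
    dx, dy = x1 - x0, y1 - y0
    if dx < 0:                       # ignore which way the stroke was drawn
        dx, dy = -dx, -dy
    angle = math.degrees(math.atan2(-dy, dx))   # flip y so "up" is positive
    if abs(angle) < 20:
        return "-"
    if abs(angle) > 75:
        return "|"
    return "/" if angle > 0 else "\\"


# Hypothetical recipes: the strokes of each letter in a common writing order.
RECIPES = {
    "A": ["/", "\\", "-"],
    "H": ["|", "|", "-"],
    "T": ["-", "|"],
    "N": ["|", "\\", "|"],
}


def recognize_strokes(strokes):
    """Return the letter whose stroke recipe matches exactly, or None."""
    shapes = [stroke_shape(s) for s in strokes]
    for letter, recipe in RECIPES.items():
        if shapes == recipe:
            return letter
    return None


# An "A" written as three separate strokes: up, down, then the crossbar.
a_strokes = [
    [(10, 100), (30, 50), (50, 0)],
    [(50, 0), (70, 50), (90, 100)],
    [(30, 55), (50, 55), (70, 55)],
]
print(recognize_strokes(a_strokes))  # A
```

Drawing a stroke in the opposite direction (top to bottom instead of bottom to top) gives the same label, because the function ignores which end was the start.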